Penn Medicine Academic Computing Services was recently formed through the consolidation of several of the largest groups on campus providing computing services to departments, centers and institutes. Its role is to ensure that data, informatics, computing infrastructure, and technical expertise are readily available to grease the wheels of science and clinical care at Penn Medicine. We will occasionally bring you stories of its expanded role. I recently spoke with Brian Wells, associate vice president of Health Technology and Academic Computing, and Ben Voight, assistant professor of Pharmacology and Genetics, about an unusual application.

Uncovering the genomic architecture of spider silk genes wasn’t top of mind for Benjamin Voight, PhD, when he first came to Penn a few years ago. But he and postdoctoral researcher Paul Babb are now deep into sequencing the whole genomes of two spider species: a Golden Silk Orb Weaver and Darwin's Bark Spider.

The de novo assembly of the whole genomes of these two species is “like putting together a jigsaw puzzle without the box-lid picture to guide us,” says Voight, assistant professor of Pharmacology and Genetics. Assembly also demands a substantial amount of computing power, so Voight consulted with Brian Wells, associate vice president of Health Technology and Academic Computing, on the design and construction of the new High Power Computing Cluster (HPCC) for this project.

“Spider silk is an impressively strong biomaterial – the tensile strength of steel, but a fifth of the weight. Learning about all the genes involved seemed like the place to start when thinking about biomedical materials applications,” says Voight. “When my lab isn’t studying the basis of complex human disease, we’re using our skills in computational biology to tackle this project.”

The Voight team is also collaborating with two arachnid biologists from the University of Vermont (Ingi Agnarsson and Linden Higgins), through a bit of the serendipity of science. When Voight was at a 2010 diabetes meeting in Oxford, UK, an arachnid biologist from Vermont emailed him about featuring one of his papers on diabetes for a journal club. Noticing her background in arachnology, he responded to her questions about his diabetes paper and casually inquired if a spider genome had been completed. “Unbelievably, I learned there was no complete genome sequence of any spider available. The closest is of spider mites – more like a pest than an actual arachnid. I thought someone should do it – and reasoned it might be something my lab could do one day.”

At a separate meeting several months later, Voight had another chance conversation, this time with a VP from a gene sequencing company, about his idea to sequence and assemble the first spider genome. His pitch was convincing enough to secure from the company the sequencing reagents needed to make the project a reality.

Form, Function, and Sequence

Different spider species produce different types of silk and webs according to function: the inner web is sticky, the dragline is strong, and the spun egg case has yet another set of properties. For example, the stream-crossing draglines of a Darwin's Bark Spider web have to be extraordinarily strong, even by the standards of ‘conventional’ spider silk. “We want to connect sequence features from our spider genome to the different biomolecules in the web components, as well as to the glands that produce each silk type. With draft genomes, we can ask questions like: What are the different genomic properties that contribute to differences in spider silk? How have they evolved along the arachnid lineage?” Voight explains.

“To find out how nature solved the web-weaving engineering question, we are sequencing the DNA as well as the RNA transcriptome sequence, which leads to understanding the proteins that make up silk,” he says. “We will soon have a first draft of the DNA sequence for both species.”

To read a genome, genetic material from tissue samples must first be run through a gene sequencer. Genetic sequences are made up of long strings of four letters (T, C, G, A) that represent the chemical building blocks of DNA, and today’s machines can generate a hefty number of these strings. However, the process takes days to weeks, depending on how much of the genome is being “read” by the sequencing machine. The amount of data generated is also tied to the breadth and depth of the reading process: data volumes can range from gigabytes (billions of characters) to terabytes (trillions of characters) per sample.
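
As a rough illustration of how data volume scales with the depth of reading, the sketch below multiplies genome size by sequencing depth (often called "coverage": the average number of times each letter is read). The 3-billion-letter genome and 30x depth are illustrative assumptions, not figures from this project, and the calculation assumes one byte per letter while ignoring quality scores and compression.

```python
GIGABYTE = 10**9   # one billion bytes
TERABYTE = 10**12  # one trillion bytes


def raw_sequence_bytes(genome_letters: int, coverage: float, bytes_per_letter: int = 1) -> int:
    """Estimate raw sequence output: each genome letter is read `coverage` times on average."""
    return int(genome_letters * coverage * bytes_per_letter)


# Hypothetical example: a 3-billion-letter genome read to 30x average depth.
total = raw_sequence_bytes(3_000_000_000, 30)
print(f"{total / GIGABYTE:.0f} GB ({total / TERABYTE:.2f} TB)")
```

Doubling either the genome size or the depth doubles the output, which is why deeper reads of larger genomes push volumes from gigabytes toward terabytes.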

Constructing a new genome without a known reference for comparison is a challenging computation. On its face, the primary job is rather simple: compare each sequence generated by the machine against every other sequence in order to find those that overlap. “We have to find the two jigsaw puzzle pieces that fit together,” says Voight. “These pieces combine together to eventually build long, continuous stretches of DNA that form the initial scaffolds of a draft genome, where the ‘picture’ of the puzzle begins to become visible.”
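
A toy version of that comparison step might look like the following: every read is checked against every other read for an end-to-start overlap, and overlapping reads are joined into a longer stretch. This is a simplified sketch, not the team's actual pipeline. It assumes short, error-free reads, and the function names and the 3-letter minimum overlap are hypothetical choices for illustration; real assembly software must also cope with sequencing errors and repeated regions.

```python
from itertools import permutations


def suffix_prefix_overlap(a: str, b: str, min_len: int = 3) -> int:
    """Length of the longest end of `a` that matches the start of `b`, or 0 if below min_len."""
    for length in range(min(len(a), len(b)), min_len - 1, -1):
        if a.endswith(b[:length]):
            return length
    return 0


def all_overlaps(reads: list[str], min_len: int = 3) -> list[tuple[int, int, int]]:
    """Compare every read against every other read; return (i, j, overlap) for pieces that fit."""
    hits = []
    for i, j in permutations(range(len(reads)), 2):
        olen = suffix_prefix_overlap(reads[i], reads[j], min_len)
        if olen:
            hits.append((i, j, olen))
    return hits


def merge_chain(reads: list[str], chain: list[tuple[int, int, int]]) -> str:
    """Join reads along an ordered chain of (i, j, overlap) links, dropping each overlapping part."""
    contig = reads[chain[0][0]]
    for _, j, olen in chain:
        contig += reads[j][olen:]
    return contig


reads = ["ATGGCGT", "GCGTACC", "TACCGGA"]
links = all_overlaps(reads)
print(links)                      # [(0, 1, 4), (1, 2, 4)]
print(merge_chain(reads, links))  # ATGGCGTACCGGA
```

The `merge_chain` helper assumes the links already form a single path in order; finding that path among millions of candidate links is a large part of the real work.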

The task is easy for a small number of pieces; any puzzle player has gone through exactly this at some stage. But the machine generates hundreds of millions of pieces, and all of them need to be compared with each other: far too many for a single person, or even a single computer, to handle. This is where parallel computing and the HPCC enter the fray.
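
The reason the workload explodes is that the number of distinct pairs grows with the square of the number of pieces: n pieces give n(n − 1)/2 pairs. The read count and the one-million-comparisons-per-second rate below are assumptions chosen only to show the scale.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def pairwise_comparisons(n_reads: int) -> int:
    """Number of distinct read pairs in an all-against-all comparison: n * (n - 1) / 2."""
    return n_reads * (n_reads - 1) // 2


# Hypothetical figures: 200 million reads, one machine doing 1 million comparisons per second.
pairs = pairwise_comparisons(200_000_000)
years = pairs / 1_000_000 / SECONDS_PER_YEAR
print(f"{pairs:.2e} pairs, roughly {years:,.0f} years on one machine")
```

Doubling the number of reads roughly quadruples the number of comparisons, which is why adding more computers, rather than waiting on one, becomes the practical option.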

Depending on the length of the sequences (complete spider genomes are estimated to range from two to four billion letters), a single assembly process takes weeks. “Imagine trying to compare the content of two different Revolutionary War history textbooks looking for similar patterns of words,” says Wells. One way to shorten the process is to break the alignment procedure into many smaller pieces and run them at the same time on many computers. This is ideally suited to the massively parallel processing capabilities of the HPCC.
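
The idea of splitting the work can be sketched as follows: the all-against-all comparison is divided into independent batches, each batch is run separately, and the results are merged. This is an illustrative sketch, not how the HPCC actually schedules jobs. Here a local thread pool stands in for separate cluster nodes, and the shared-substring test (`shares_kmer`, with a length of 4) is a hypothetical, deliberately cheap stand-in for a real alignment.

```python
from concurrent.futures import ThreadPoolExecutor


def shares_kmer(a: str, b: str, k: int = 4) -> bool:
    """Hypothetical stand-in comparison: do the two reads share any length-k substring?"""
    kmers = {a[i:i + k] for i in range(len(a) - k + 1)}
    return any(b[i:i + k] in kmers for i in range(len(b) - k + 1))


def run_batch(reads: list[str], batch_id: int, n_batches: int) -> list[tuple[int, int]]:
    """Compare the reads assigned to one batch against every later read.

    Batch `batch_id` owns reads batch_id, batch_id + n_batches, ... (round-robin), which
    spreads long and short rows of work roughly evenly. On a cluster, each batch would be its own job.
    """
    hits = []
    for i in range(batch_id, len(reads), n_batches):
        for j in range(i + 1, len(reads)):
            if shares_kmer(reads[i], reads[j]):
                hits.append((i, j))
    return hits


def compare_in_parallel(reads: list[str], n_batches: int = 4) -> list[tuple[int, int]]:
    """Run every batch concurrently and merge the results; each pair is checked exactly once."""
    # Threads stand in for separate machines: no batch needs data from any other batch.
    with ThreadPoolExecutor(max_workers=n_batches) as pool:
        per_batch = pool.map(lambda b: run_batch(reads, b, n_batches), range(n_batches))
        return sorted(pair for hits in per_batch for pair in hits)


reads = ["ATGGCGT", "GCGTACC", "TACCGGA", "TTTTTTT"]
print(compare_in_parallel(reads, n_batches=3))
```

Because every pair belongs to exactly one batch, the merged answer is the same however many batches are used; more batches simply mean more of the comparisons can run at once.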

The HPCC lives at the Philadelphia Navy Yard Technology Park, quite literally in a warehouse: nine refrigerator-sized racks cover approximately 250 square feet and deliver over 14 million virtual core-hours of processing time per year. (An Earth year is a mere 8,766 hours.)

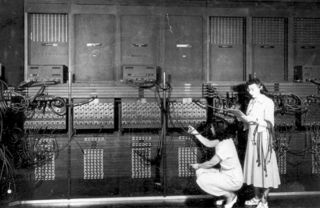
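
One way to read the 14-million figure is to divide it by the number of hours in a year, which gives the number of cores that would have to run nonstop to supply it. This sketch assumes an average year of 365.25 days (roughly 8,766 hours) and treats each virtual core as one core.

```python
HOURS_PER_YEAR = 365.25 * 24  # about 8,766 hours in an average year


def equivalent_cores(core_hours_per_year: float) -> float:
    """Number of cores running around the clock all year that would supply this many core-hours."""
    return core_hours_per_year / HOURS_PER_YEAR


print(f"{equivalent_cores(14_000_000):,.0f} cores busy around the clock")
```

In other words, the cluster's yearly capacity corresponds to roughly 1,600 cores working without a break.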

To put all of this computing power and space into perspective: the famed ENIAC, the first general-purpose programmable electronic computer, built in partnership with the U.S. military on the Penn campus in the 1940s, used about three times the floor space of the HPCC (which weighs in at 10,000 pounds) and was about five times heavier. Imagine that: using a five-ton machine to decode one of the lightest yet strongest natural materials. The seemingly incongruent nature of science never ceases to amaze.